Qwen3.6-35B-A3B

Posted: Sat Apr 18, 2026 6:05 am

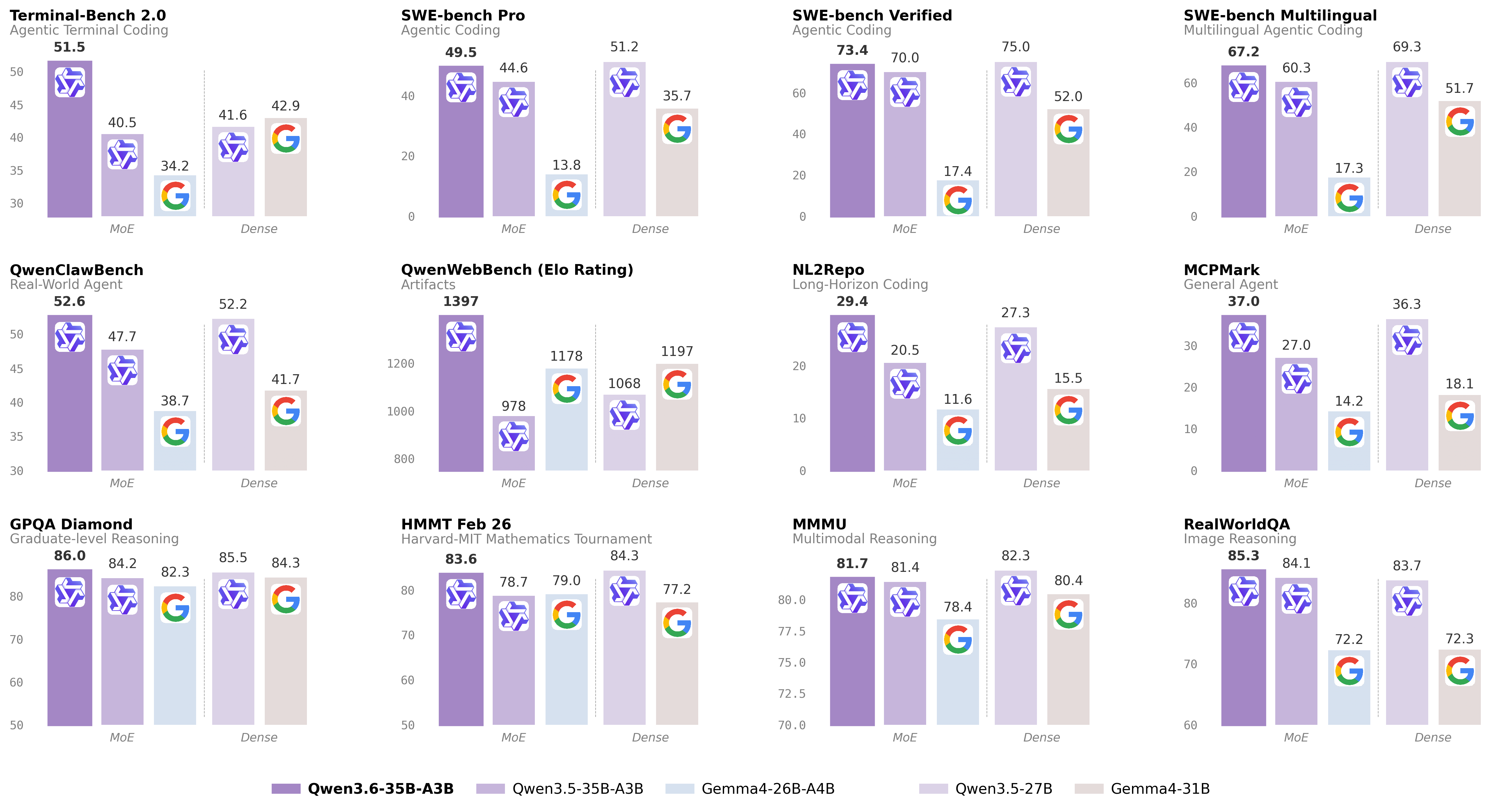

I did some testing via llama.cpp on a 4070 mobile with 8GB of VRAM, and the model runs at 30 t/s, which is really not bad. It does slow down to 20 t/s once the context hits around 15,000 tokens, with a context limit of 32k. It's crazy what we can do locally nowadays.

Following the February release of the Qwen3.5 series, we're pleased to share the first open-weight variant of Qwen3.6. Built on direct feedback from the community, Qwen3.6 prioritizes stability and real-world utility, offering developers a more intuitive, responsive, and genuinely productive coding experience.

Qwen3.6 Highlights

This release delivers substantial upgrades, particularly in:

Agentic Coding: the model now handles frontend workflows and repository-level reasoning with greater fluency and precision.

Thinking Preservation: we've introduced a new option to retain reasoning context from historical messages, streamlining iterative development and reducing overhead.

Model: https://huggingface.co/Qwen/Qwen3.6-35B-A3B

GGUF: https://huggingface.co/unsloth/Qwen3.6-35B-A3B-GGUF